LLMs

sari and

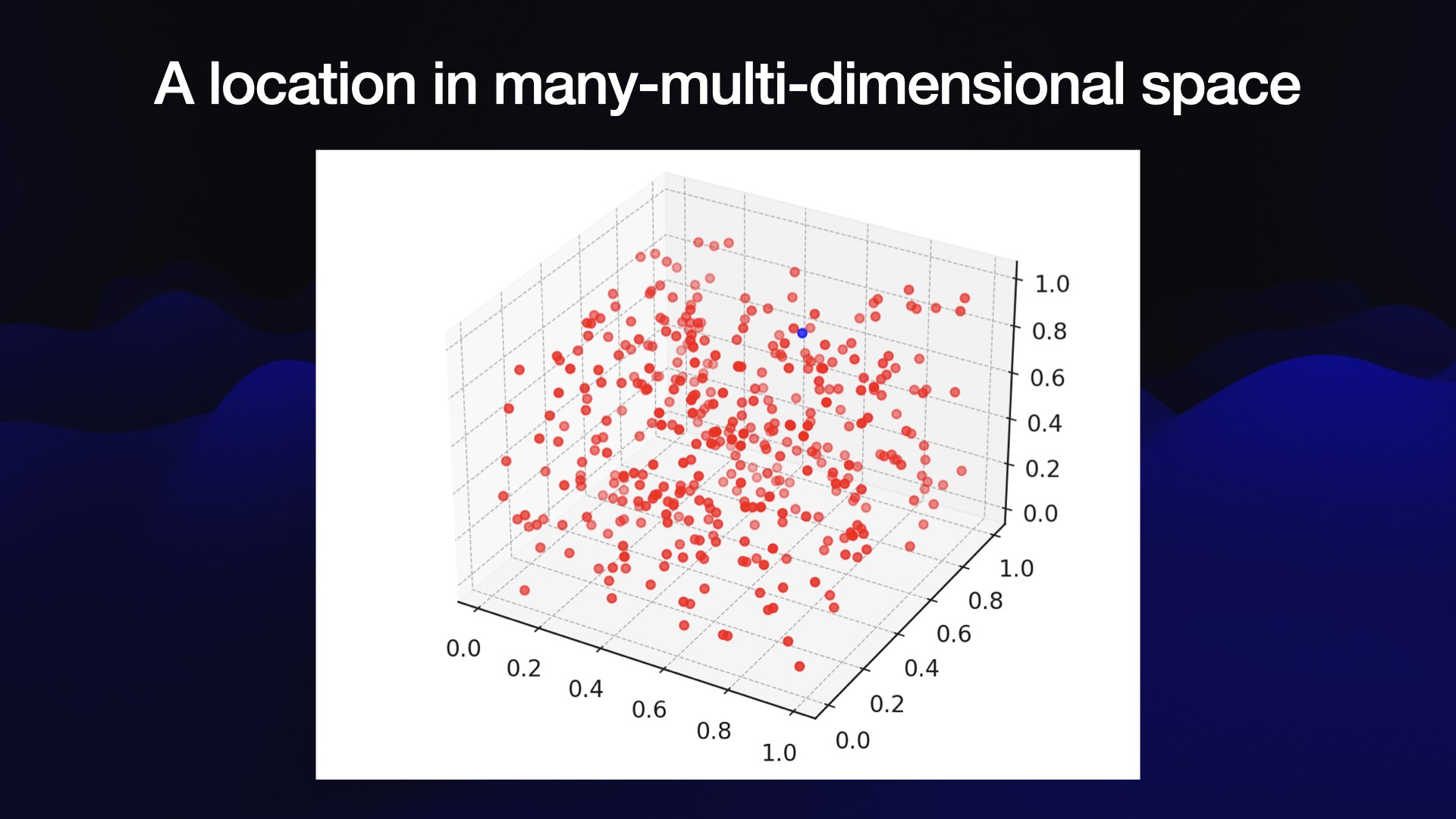

"A key challenge of (LLMs) is that they do not come with a manual! They come with a “Twitter influencer manual” instead, where lots of people online loudly boast about the things they can do with a very low accuracy rate, which is really frustrating..."

Simon Willison, attempting to explain LLMs