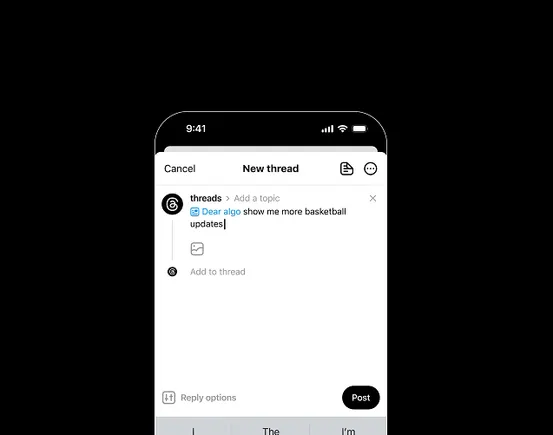

algorithmic choice & control

However, many tools for authoring personal classifiers on social media are designed for users with heightened motivation, such as community moderators [12, 40] and high-profile content creators [41]. In focusing on these users, such tools neglect the usage patterns of general social media users, who spend significant time on social media...

End User Authoring of Personalized Content Classifiers: Comparing Example Labeling, Rule Writing, and LLM Prompting

Bluesky’s approach to curation is based on the idea that people “must have control over our algorithms if we’re going to trust in our online spaces” (The Bluesky Team, 2022). The platform’s support for custom feeds supports this mission, offering “essentially a farmers’ market of algorithms” (Graber quoted in SXSW, 2025, 54:18). Yet custom feeds exist...

We found that writing prompts generally allowed participants to create personal content filters with higher performance more quickly, primarily driven by having the highest recall despite comparable precision across conditions.

End User Authoring of Personalized Content Classifiers: Comparing Example Labeling, Rule Writing, and LLM Prompting

As a result, for many users to realistically participate in curation, tools for authoring classifiers should support rapid initialization. As end users oftentimes engage with internet content as a leisure activity, a successful tool should allow them to quickly and intuitively build an initial classifier with decent performance.

End User Authoring of Personalized Content Classifiers: Comparing Example Labeling, Rule Writing, and LLM Prompting

arxiv.org

In this work, we compare three strategies—(1) example labeling, (2) rule writing, and (3) large language model (LLM) prompting—for end users to build personal content classifiers